SQL Database Recovery Case Study: IBM System x3650 M3

When this client’s IBM System x3650 M3 server crashed, they feared the worst. IBM had ended both production of and support for this particular model years ago, and if something were wrong with the server itself, it would mean either trying to find a vendor still selling the System x3650 M3, or buying a new model (and thus implementing headache-inducing changes to their infrastructure). Their business’s IT department confirmed that the problem lay in the hard drives within the server. That came as a relief.

But it was a small relief, because one other problem remained: How to get the data off of the crashed RAID-5 array. This server had contained a mission-critical SQL database for the client’s business. Fortunately, Gillware’s world-class RAID 5 data recovery experts were in our client’s corner to recover their SQL database.

SQL Database Recovery Case Study: IBM System x3650 M3

Server Model: IBM System x3650 M3

RAID Level: RAID-5

Drive Model: ST9146852SS (x4)

Total Capacity: 438 GB

Data Loss Situation: Two hard drives went offline and couldn’t be brought back online. IT department verified it was the drives that had caused the failure failed and not an issue with the raid system.

Type of Data Recovered: SQL Database

Binary Read: 100%

Gillware Data Recovery Case Rating: 10

IBM System x3650 M3 Hard Disk Failure Error

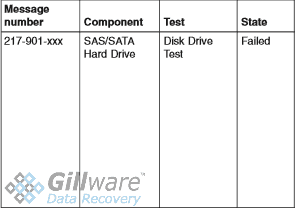

Our clients knew they were in trouble when the server’s DSA preboot tests spit out an error message at them. Error message 217-901 popped up, which informed them that one or more of the SAS hard disk drives in the four-drive array had failed to pass the preboot test.

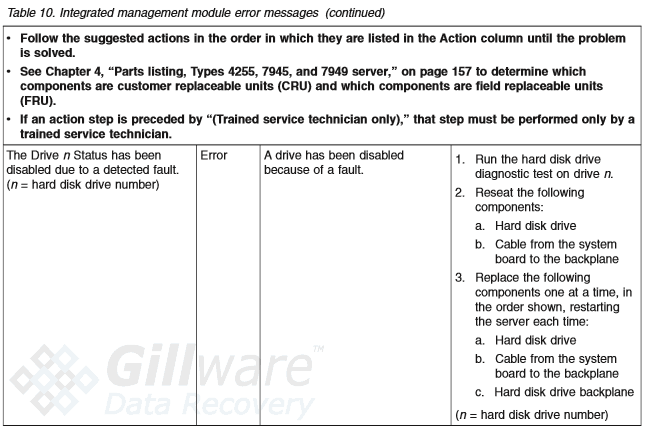

Doing further testing with the IBM x3650 M3 server’s integrated management module, our clients found that disks 0 and 1 had been disabled due to a detected fault. The failure of both hard drives—half the drives in the array—had crashed the server.

If only one hard disk drive had failed, our clients wouldn’t have been in nearly as sticky of a situation. RAID-5 arrays, due to their XOR parity setup, can bounce back fairly easily from single hard disk failure. But two or more drives failing is a recipe for disaster—though, thankfully, a disaster our RAID data recovery specialists have gotten quite adept at mitigating.

IBM System x3650 M3 Server Data Recovery

We’ve been recovering RAID arrays of all stripes and sizes since 2004, and we’ve gotten very good at the whole RAID recovery shebang. We get RAID-5 arrays in our data recovery lab at least once a week, from businesses, nonprofits, medical clinics, etc. of all sizes, from three drives to thirty.

Since RAID-5 spreads XOR parity data throughout the hard drives in the array, you can lose one hard drive with no (major) ill effects. When one disk vanishes, the server looks at the XOR parity data on the remaining disks. The server can use this data to completely reconstruct the missing data on-the-fly. And when the user inserts a new hard drive to replace the old one, the server uses that same parity data to turn the new drive into a clone of the old one.

Things go badly, though, when a second drive fails. The server can plug up the ensuing gaps from one failed hard drive, but not two. That is where we come in to clean up the mess.

Data Recovery Software to recover

lost or deleted data on Windows

If you’ve lost or deleted any crucial files or folders from your PC, hard disk drive, or USB drive and need to recover it instantly, try our recommended data recovery tool.

Retrieve deleted or lost documents, videos, email files, photos, and more

Restore data from PCs, laptops, HDDs, SSDs, USB drives, etc.

Recover data lost due to deletion, formatting, or corruption

SQL Database Recovery

To recover our client’s SQL database from their crashed IBM System x3650 M3 server, our engineers first inspected the two failed disks from the RAID-5 array. Both had suffered bugs in their firmware, the “operating system” of a hard drive that manages how the drive reads and writes data. With the right tools and expertise, our technicians could fix these bugs and create disk images of the failed drives.

One of the two failed drives had a few bad sectors on its platters, preventing our engineers from reading 100% of its contents (which would be ideal). However, RAID-5’s very XOR parity proves an unexpected ally in a situation like this.

Our RAID data recovery engineer Cody only needed three of the four hard drives to successfully reconstruct the array and pull off our client’s data. And what better drive to exclude than the one hard drive we could only read 99.9% of?

Sure, it was only a fraction of a percent missing—but you can never be too careful, especially when you’re trying to recover a SQL database. SQL databases are relational databases, and as a result, missing or corrupted data can have far-reaching consequences for the rest of the information in the database.

After rebuilding the RAID-5 array and pulling off the SQL database, our SQL database recovery specialists carefully took a look at the recovered data to make sure the database worked as intended and had no issues with data corruption. The database, our engineers found, worked perfectly. We shipped the recovered data back off to our client and rated this data recovery case a perfect 10.

IBM System x3650 M3 Server End-of-Life: Not the End of the World

Twice a year, IBM releases end-of-service notifications for various models of service. This is often deemed the “End of Life” for any model on the list. But while that moniker alone may be enough to inspire dread, all it means for a model to reach its EOL is that IBM no longer produces or fully supports the model (usually because they just released a newer model). Users don’t necessarily have to replace their server once it’s reached its end-of-life, though—they can purchase an extended service plan from IBM, or hire a third-party IBM support company. After all, if the server still works, why replace it? (It saves businesses and organizations the headaches of having to completely revamp their infrastructures to accommodate new models, too.)

The IBM System x3650 M3 reached its end-of-life in 2012, for example, when IBM released the System x3650 M4 to replace it. Yet our clients here kept using it for their SQL database all the way through 2016 and even a little into 2017 (in 2015, the System x3650 M5 came out). It served them faithfully all those years after IBM formally retired the model—in the end, the server crashed entirely due to hard disk failure. And that failure would have been just as likely to happen to any newer model of IBM server.

End-of-Life isn’t the end of the world. And if you pick a good data recovery company to take care of things when your server crashes, then neither is hard disk failure.