WannaCry and Global Cyber Security Threats: Y2K 2.0?

UPDATE: MARCH 29, 2018

While the initial storm from May 2017 may have long since subsided, WannaCry is still out there, still being passed around by malicious hackers. Yesterday, Boeing became the latest WannaCry victim when it was hit by what it called a “limited intrusion” that affected only a small number of systems. However, the fact that an intrusion made it as far as it did into such a large corporation underscores what other big hacks and data breaches over the past year have already made clear:

All organizations, large and small alike, need to take cybersecurity much more seriously. Find out what you can do to protect yourself from ransomware or other cyber intrusions with Gillware’s ransomware prevention guide.

This article was originally published on May 24, 2017.

Unless you’ve been living under a rock for the past few weeks, you’ve probably noticed ransomware in the news. Most notably, you’ve heard of WannaCry, a ransomware computer worm that made many headlines in May 2017. This ransomware attack, starting on May 12, 2017, infected more than 230,000 computers in over 150 countries. Russia, Ukraine, India, and Taiwan suffered the most infections.

The UK’s NHS (National Health Service) was also heavily affected by WannaCry. In England and Scotland, staff had to resort to tried-and-true technologies like pencil and paper. Unlike many ransomware viruses, which wriggle their way into computers and networks through phishing and social engineering, the hackers behind WannaCry exploited a massive vulnerability in some Windows operating systems, including Windows XP, which allowed the worm to enter insufficiently protected networks with astonishing ease.

This vulnerability had initially been discovered by the NSA and kept hidden. However, it became known to hackers after a group published a list of leaked NSA hacks and spying tools. Some cyber security professionals have laid blame on the NSA for hoarding such exploits for their own ends instead of alerting vendors to the security holes in their products. Regardless of how much blame may be placed on the NSA, though, the WannaCry ransomware attack ultimately had the reach it did because so many large and critical institutions had such lax cyber security measures in place.

Ransomware: A Booming Industry

Ransomware is a form of malware, or malicious software, that rapidly encrypts the user-created data on a computer or server and, as its name suggests, holds it hostage until a ransom is paid. The hackers typically demand the ransom in Bitcoin. This digital “cryptocurrency” is difficult to trace back to real people, which allows the hackers to receive payment without revealing their identities.

Over the past few years, the frequency and scale of ransomware attacks have grown. There are multiple reasons for the growth of this illegal “cottage industry”. Mainly, it has become easier and easier for hackers to get their hands on ransomware and rake in money as their targets fork over the digital dough to get their files back.

For more information on how ransomware spreads, take a look at Gillware’s An In-Depth Look at Ransomware.

Is WannaCry Over? Not Quite

Fortunately, the initial spread of WannaCry was stopped in its tracks very quickly. A cyber security researcher accidentally discovered a “kill switch” inside the virus that massively slowed its proliferation. In the following days, Microsoft released security updates for older operating systems as well, including ones it no longer supported. Even the aged Windows XP, which fell out of Microsoft support years ago but is still in use, got an update.

The initial push may have been stopped, but security experts are still worried that WannaCry could come back stronger than ever. And when that happens, we may not be lucky enough to discover such an easily exploited “kill switch”. A stronger attack targeting hospitals, financial institutions, and educational institutions (ransomware’s current targets of choice), or even critical infrastructure such as power plants, could cause chaos in countries across the world… unless we prepare ourselves by beefing up our cyber security efforts.

Digital Déjà Vu

Stop me if you’ve heard this one before. A potentially globe-spanning threat that could render computers across the world inoperable… A threat that would spread through machines running insufficiently protected hardware and software… One of such magnitude and potential destructive force that computer experts across the world call for bold action…

That’s right: the last time you heard this sort of talk was probably over 15 years ago. You may remember it as the time the world caught the “Millennium Bug”.

I Love the 90s: Y2K Edition

If you were alive during the 1990s (and you probably were), you might remember some of the furor over Y2K. There was quite a bit of it leading up to the New Year. Some people even thought airplanes would fall from the skies in mid-flight or ICBMs would whizz through the air, unbidden, the instant their clocks turned over. At the extreme end, religious fundamentalists whipped their flocks into apocalyptic fervor.

In 1999, the annual “Treehouse of Horror” Halloween special of The Simpsons even featured a story lampooning Y2K panic, in which every electronic device causes world-ending, cartoonish violence at the stroke of midnight when Homer unwittingly spreads a “Y2K” virus from his post at the Springfield nuclear plant.

Yet the instant the ball dropped brought no Armageddon with it. Planes kept flying. Hospitals kept running. People’s electric razors didn’t come to life and start trying to decapitate them. Some people named Y2K “The Disaster That Never Was”. In later years, some would scoff at all the hue and cry raised over something so simple.

True, many fears spread about the year 2000 were more than just a tad overblown. But in spite of the overreaction and fear-mongering, Y2K was potentially a serious issue indeed. Because we as a society took proactive, preventative measures, though, we were able to head off whatever negative consequences might have resulted from it.

Why Was Y2K a Big Deal?

Misunderstanding over the true nature of Y2K ran rampant in the late 90s, fueling the apocalyptic “millennium bug” hysteria. In pop culture, Y2K often took the form of some sort of malicious virus, a technological bubonic plague, perhaps a Luddite avatar of the evils of technology or a judgment from God. But it was not a virus. Rather, it was simply an absurdly common software bug.

In the late 90s, computer experts from all around the world were very concerned about what would happen when the date changed from December 31, 1999 to January 1, 2000. When many of these programs were first written, computer memory, in mainframes and early personal computers alike, was a scarce and expensive resource. To save space, programs across all industries and sectors often stored the year as only two digits: the last two. For example, 1995 was just “95”, since those first two digits were a given, right? Except in a few years… those two digits wouldn’t be.

And so, as the year 2000 approached, computer experts feared that when the clocks switched over to the new year, these programs would see the 00 digits… and think it was 1900, not 2000. This would cause a whole host of database issues across the world, in both the public and private sector. Outages, failures, and glitches throughout infrastructure all over the world could result. John Hamre, then-Deputy Secretary of Defense under President Bill Clinton, warned that Y2K was “the electronic equivalent of the El Niño.” Hamre further warned that “there will be nasty surprises around the globe” if nothing was done to prepare.
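To make the two-digit problem concrete, here is a minimal Python sketch. It is not code from any real Y2K-era system; it assumes a program that stores years as two digits and computes elapsed time by subtracting them. The function names are hypothetical. The second function illustrates “windowing,” one of the remediation techniques used during Y2K preparation (the other common approach was expanding stored years to four digits), and its pivot value of 50 is an arbitrary assumption.

```python
def expand_year_naive(yy: int) -> int:
    """Turn a two-digit year into a full year the way many older programs
    implicitly did: by assuming the century is always 19xx."""
    return 1900 + yy


def expand_year_windowed(yy: int, pivot: int = 50) -> int:
    """Hypothetical 'windowing' fix: two-digit years below the pivot are
    read as 20xx, everything else as 19xx. The pivot of 50 is an assumption."""
    return 2000 + yy if yy < pivot else 1900 + yy


def years_elapsed(start_yy: int, end_yy: int, expand) -> int:
    """Years between two stored two-digit years, using the given expansion rule."""
    return expand(end_yy) - expand(start_yy)


# A record created in "95" and checked in "00" (meaning 1995 and 2000):
print(years_elapsed(95, 0, expand_year_naive))     # -95: "00" is read as 1900
print(years_elapsed(95, 0, expand_year_windowed))  # 5: "00" is read as 2000
```

Windowing is cheaper than widening every stored date, but it only postpones the problem: with a pivot of 50, a stored “50” will still be read as 1950.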

And so we did something.

What We Did Before January 1, 2000

To prepare for the coming 21st century, computer systems all around the world had to be dragged kicking and screaming into… well, the 21st century. In the United States, the federal government passed the Year 2000 Information and Readiness Disclosure Act. Through coordination with FEMA and with the private sector, the government worked to inform people of the issues, monitor and assess the progress made in “Y2K-proofing” the nation’s infrastructure, and prepare and regulate contingency plans. The United States also ran a joint program with Russia to reduce the risk of false alarms in either nation’s nuclear early-warning systems.

Other governments spearheaded similar countermeasures around the world. Over 120 countries collaborated in the International Y2K Cooperation Center to minimize any adverse effects of the date change on the global society and economy.

Now, this probably won’t surprise you. But at this point, a lot of infrastructure in the banking industry, the healthcare industry, the government, etc. was running on very old hardware and software. In many cases, Y2K-proofing meant metaphorically throwing these aged systems in a ditch and putting a bullet in their heads.

The total cost of all this preparation—of replacing outdated hardware and software, rewriting code, and overseeing it all—was over $300 billion. Few would have footed their respective portions of this daunting bill, were it not for the looming specter of global catastrophe. They would have allowed their aging systems to keep on chugging forever. Or at least, until everything inevitably fell apart for various, completely different reasons.

What Would Have Happened Without Proactive Measures?

In the aftermath of Y2K, the hysteria fizzled out. Some of the more “prepared” households rang in the new year with nothing but dozens of cans of Spam to show for their efforts. Many doubted that Y2K had been that big of a deal at all. Perhaps we could have just sat by and done nothing and still come out fine.

We can only speculate on what would have happened had we stood by and done nothing to Y2K-proof our infrastructure. If anything, though, the few minor bugs that popped up on January 1 in insufficiently Y2K-proofed systems around the world could give us a hint of what we might have seen on a wider and more severe scale.

Speculating On Possible Effects of Y2K

In the UK, a hospital sent out incorrect risk assessments to over 100 pregnant women. In Japan, some models of mobile phones started deleting new messages instead of old ones to free up memory. Radiation-monitoring equipment at some nuclear plants failed for a brief period of time. The US Naval Observatory briefly gave the date on its website as “1 Jan 19100”. At racetracks in Delaware, 150 slot machines stopped working.
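The article doesn’t say what caused the Naval Observatory’s “19100” date, and the sketch below should not be read as its actual code. It shows one well-known way to produce exactly that string: in C’s standard library, the `tm_year` field of `struct tm` counts years since 1900, so 1999 is stored as 99 and 2000 as 100. Code that prints a hard-coded “19” in front of that number works for a century and then breaks. The Python functions and the month table below are hypothetical stand-ins for illustration.

```python
MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]


def format_date_buggy(day: int, month: int, years_since_1900: int) -> str:
    # Bug: assumes the century is always "19" and pastes the
    # years-since-1900 offset (like C's tm_year) right after it as text.
    return f"{day} {MONTHS[month - 1]} 19{years_since_1900}"


def format_date_fixed(day: int, month: int, years_since_1900: int) -> str:
    # Fix: add the offset to 1900 arithmetically instead of concatenating text.
    return f"{day} {MONTHS[month - 1]} {1900 + years_since_1900}"


print(format_date_buggy(31, 12, 99))  # 31 Dec 1999 (looks correct for a century)
print(format_date_buggy(1, 1, 100))   # 1 Jan 19100
print(format_date_fixed(1, 1, 100))   # 1 Jan 2000
```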

These and other Y2K-related glitches were all far, far from world-ending catastrophes. But take these minor glitches and imagine them not so minor—and far more widespread. Of course, the world wouldn’t have ended, and nobody would need their bunkers full of Spam. But a lot of people around the world could have suffered.

At the very least, damage to (potentially) millions of patient medical records could have led to all kinds of harm. Much of it would not have become immediately apparent. For example, imagine if the Y2K bug had caused hospitals across America to lose accurate vaccination records for millions of children. You can see what effect that would have just by examining areas in the United States where anti-vaccination sentiment has led to outbreaks of diseases thought to have been eradicated. Now imagine it more widespread: an epidemic.

Of course, maybe nothing would have happened had we done nothing. But still, the measures taken in the face of that threat undoubtedly left the world a better place than it had been.

Are Future IT Security Threats Like WannaCry a New Y2K?

We’ve seen lots of new and surprising attacks in recent years on digital infrastructures. These attacks have proven ambitious and shockingly effective. Fortunately, so far we’ve stopped them in their tracks relatively quickly and with little lasting damage.

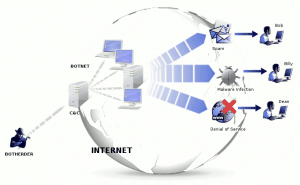

WannaCry is probably the first example that comes to mind, as it’s still fresh in people’s memories. But you might also remember the massive Mirai botnet attack of October 2016, which knocked popular websites offline for many people across the US. It was enabled by hackers exploiting the practically nonexistent security measures within the “Internet of Things”.

Ransomware, botnet attacks, and infrastructure hacks to come could be an even more disastrous threat to our global society than Y2K. We depend on computers and the Internet even more than we did in 1998. The Internet alone is a major piece of modern infrastructure. Something that could slow down or render inaccessible wide swaths of the Internet for millions of users for an extended period of time could be right around the corner, and the economic damage alone could be devastating. Human civilization wouldn’t end unless things went south from there in an awful hurry. But it would still hurt a lot.

But no one is going to start buying cans of Spam for their bunker or joining doomsday cults because of massive, globe-spanning ransomware attacks. For one, we’re probably still a little burned out on technological-apocalypse hysteria from the Y2K bug itself. Until someone invents “Skynet”, people aren’t likely to go into apocalyptic panic over computer technology anytime soon. For another, a million other scary things that could send human civilization back to the Stone Age are already occupying our minds. Your particular nightmare of choice, of course, varies depending on your political leanings.

Same Vulnerabilities, New Threat

Let’s look at the technological state of the union in the mid-to-late 90s, before the coordinated efforts to Y2K-proof everything.

Important elements of our social and economic infrastructure ran on outdated technology: hardware we should have given the boot long ago. No one wanted to update their systems. Firstly, it was expensive. Secondly, as the saying goes, if it ain’t broke, don’t fix it.

It took government initiative and massive cooperation between nations of the world—and, of course, the threat of global devastation—to light a big enough fire underneath the public and private sector to finally put long-outdated systems out to pasture.

Now, almost two decades later, where are we? In many ways, right back where we started.

Hospitals and government institutions around the world, as well as the vast majority of ATMs, still run Windows XP, even though the operating system is nearly 16 years old and no longer supported by Microsoft. (The threat posed by WannaCry was so grave that Microsoft made a rare exception and issued an emergency patch for Windows XP anyway.)

Almost two decades after initial preparations started for Y2K, many businesses and institutions are right where they were back in 1997: in desperate need of modernization. Only this time, the threat really is malevolent, designed by humans with infinite capacity for violence. And it could wreak far more havoc than a simple bug.

2017 may end up a repeat of 1998—the year governments and institutions around the world fully realized the need to band together and begin future-proofing our burgeoning digital infrastructure in earnest.

Preventing the Next WannaCry

Ransomware hackers have singled out the healthcare industry, the financial industry, and educational institutions as prime targets simply because they are the most vulnerable. They’re the ones running operating systems old enough to start learning how to drive. They’re the ones with outdated and insufficient IT security infrastructures. The people at the top of the chain of command in these organizations are loath to bite the bullet: no one wants to commit to an IT infrastructure overhaul that could take years and cost millions of dollars. And these organizations are so big and slow-moving that modernizing would take a herculean effort.

These sectors of our society also happen to be really, really important and in dire need of protection. And ransomware hackers could even target the computer systems of hydroelectric dams or nuclear power plants, if they chose to.

What Can We Do?

We can point fingers all day. But the fact of the matter is simply this:

These ransomware attacks succeed, and continue to succeed, because too many people do not take cyber security seriously enough. The vast majority of WannaCry’s successful intrusions probably could have been stopped altogether. The victims just needed a firewall guarding their networks from unauthorized intrusions, along with the security update Microsoft had released for supported versions of Windows two months before the attack.

WannaCry, botnet attacks using IoT devices, and other big hacker shows of force should serve as warnings that we need to take IT security much, much more seriously. Large corporations and government institutions that play key roles in maintaining the many aspects of our complex, interconnected global society must understand that they are especially at risk. As with Y2K, we need incentives strong enough to overcome large businesses’ and sprawling institutions’ IT malaise and resistance to modernization.

Speaking in broad strokes, this is what we need to see more of to prevent future global hacks like WannaCry:

- More education on phishing and social engineering hacks. This wasn’t the main propagation vector for WannaCry, but it is still a commonly used tool in the hacker toolkit.

- Committing to stronger network security across the board. This includes having firewalls to protect sensitive networks, using secure VPNs to access networks remotely and in-office, and having multi-factor authentication enabled to prevent unauthorized access (even if a user’s passwords do become compromised).

- Keeping up-to-date on O/S security updates. When Microsoft wants you to update your computer, it can be a pain. But sometimes that update does something really important—like patching a gaping hole in your operating system’s security.

- Bidding farewell to extremely outdated hardware and software. Of course, no one wants to switch to a whole new operating system on Day One of its release. But no matter how convenient, the opposite extreme—staying on Windows XP long past the point of practical obsolescence—is perhaps even more foolish.

- More regulations to impose more security measures on IoT devices. IoT gadgets have grown like weeds, with companies producing everything from Internet-enabled refrigerators to Internet-enabled salt shakers. These devices have barely any security measures to speak of, leading to the attack we all remember so fondly. Something needs to keep the creators of these devices in line. You shouldn’t have to live in fear of your smart fridge becoming a Trojan horse.

What Can I Do?

If we aren’t willing to take proactive, preventative measures like we did with Y2K, we will be stuck on the back foot. We’ll always be one step behind the bad guys, forced to repair the damage before we can put preventative measures in place. When you play a purely reactive game, you wait until after the car hits the kid to install a sorely needed stop sign. When it comes to nation- and world-spanning IT security threats, we need to start thinking harder about the future. As with many other issues facing our world, if we don’t take responsibility now, we will regret it later.

Perhaps the wheels have already started to move. Recently, after post-WannaCry criticism of the NSA’s practices, Senator Brian Schatz introduced the Protecting Our Ability to Counter Hacking Act of 2017, or PATCH Act. This act could curb the state’s hoarding of known software security vulnerabilities. Of course, it’s just one potential band-aid on one aspect of our situation. There’s no “magic bullet” for our cyber security woes. Global malware threats require a far more holistic remedy.

“Let everyone sweep in front of his own door,” Goethe once said, “and the whole world will be clean.” Yet it will take more than that to get us out of this mess. Our problems are wide-ranging, deep, and systemic. But for now, clean up your own front door. Work hard to make your own IT security measures as robust as you can.

For tips on protecting your business or organization from ransomware, consult Gillware’s Ransomware Prevention Guide.